"Java is very much in full retreat."

-- R. Loui

Professor Ronald Loui has an interesting article on the rise of scripting languages (In Praise of Scripting: Real Programming Pragmatism) in the July 2008 issue of IEEE Computer. It claims that scripting languages such as Perl, Python, and JavaScript have dramatically fulfilled their early promise, provide many benefits, and are poised to take over the lead from Java. However, the academic programming language community is stuck in theory and hasn't recognized the ascendance of scripting languages.

I agree that scripting languages are on the rise. Most people would agree that they provide rapid development, higher levels of abstraction, and brevity that helps the programmer. The article also describes how scripting languages can be a performance win, since they allow experimentation with, and implementation of, efficient algorithms that would be too painful to write in Java or C++. So even if C++ is faster at the micro-benchmark level, a programmer using a scripting language may end up with faster algorithms overall. That said, I've argued, somewhat controversially, that Arc is too slow for my programming problems, so I remain unconvinced that basic performance can be ignored entirely.
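As a rough illustration of the "faster algorithms overall" argument, here is a minimal Python sketch. The function names and the duplicate-detection task are my own assumptions, not taken from Loui's article. The point is only that the hashed version costs no more effort to write than the quadratic one, so the better algorithm is the natural first attempt.

```python
from typing import Hashable, Iterable, List


def has_duplicate_quadratic(items: List[Hashable]) -> bool:
    """Naive approach: compare every pair, O(n^2) comparisons."""
    for i in range(len(items)):
        for j in range(i + 1, len(items)):
            if items[i] == items[j]:
                return True
    return False


def has_duplicate_hashed(items: Iterable[Hashable]) -> bool:
    """Hash-based approach: one pass with a built-in set, O(n) on average."""
    seen = set()
    for item in items:
        if item in seen:
            return True
        seen.add(item)
    return False
```

Both functions give the same answers; only the growth rate differs. A fast language running the quadratic version will eventually lose to a slow language running the hashed one as the input grows, although the constant factors still matter for small inputs.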

As for the claim that Java is in full retreat, it strikes me as wishful thinking. (I'd believe "slow decline" though.) It will be interesting to check back on this claim in 5 years.

"I personally believe that CS1 [freshman computer science] Java is the greatest single mistake in the history of computing curricula."

-- R. Loui

The article suggests that good languages for teaching introductory computer science are gawk, JavaScript, PHP, and ASP, but that Python is emerging as the consensus choice for the best freshman programming language. This is the hardest part of the article for me to swallow. The idea of writing real programs in Awk never occurred to me, and I remain skeptical even though the author claims it works well. For those who would suggest Scheme as an introductory programming language, it was displaced as a dominant freshman language by Java a decade ago, and is apparently no longer considered an option.

I can't argue with the author's claim that student learning is enhanced by experimenting, writing code, and getting hands-on experience, and that scripting languages make this faster and easier.

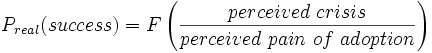

"Python and Ruby have the enviable properties that almost no one dislikes them, and almost everyone respects them."

-- R. Loui

In Why your favorite language is unpopular I discussed how the Change Function model can explain the success of programming languages based on maximizing the crisis solved and minimizing the perceived pain of adoption. This model applies to scripting languages as well:

Magnitude of crisis solved by Tcl/Tk: High - How to add a scripting language to a C program. How to add a GUI to a C program without painful X11 and Motif code.

Total Perceived Pain of Adoption: Low - Link Tcl in with your C program and add a few hooks. Create the GUI with trivial scripts.

Magnitude of crisis solved by Perl: High - How to quickly write CGI scripts. How to solve problems too complex for shell scripts. How to process files. How to develop quickly and iteratively.

Total Perceived Pain of Adoption: Low - Apart from looking like line noise, Perl is easy to get started with, is well integrated with Unix, has the definitive regex implementation, and has libraries for almost everything.

My point is that these languages solved specific painful problems and had low pain of adoption. As a result, they were much more successful than beautiful, powerful languages that were less able to directly solve painful problems or were more painful to adopt.

"The real reason why academics were blindsided by scripting is their lack of practicality."

-- R. Loui

A major thrust of the article is that academics are too concerned with theoretical issues of syntax and semantics, rather than pragmatic issues of what a language can achieve quickly, inexpensively, and practically. Academics are said to be too tied to theoretical concepts such as object-oriented programming and strong typing, and are missing the real-world benefits of scripting languages.

(Interestingly, Rob Pike made a similar argument against academics in the context of operating systems software (Systems Software Research is Irrelevant), stating that academic research is irrelevant and the real innovation is in industry. Since I have friends doing academic OS research, I should add a disclaimer here that I don't necessarily agree.)

One measure of pragmatics raised by the paper is how well a language works with other Unix tools. I think the importance of this is underappreciated. In particular, I view it as a significant barrier to the adoption of Arc. Running Arc as a shell script instead of a REPL is nontrivial (as is the case with many Lisp and Scheme implementations). Running an external program from Arc is clunky, even though it is often necessary to actually get things done (Kens' law), and real pipes are missing from Arc entirely.

Java's integration with Unix also has painful gaps - where's getpid() for instance? Why is JNI so difficult compared to calling native code from C#? I blame Sun's pure-Java platform independence ideology, and I'm surprised it hasn't hurt Java more.

On the other hand, Python and Perl provide a remarkable degree of integration, which I view as a key factor in their success. Likewise, Visual Basic is highly integrated with the Windows environment and highly successful there.
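To make the integration point concrete, here is a minimal Python sketch of the two things complained about above: getting the process ID and wiring external programs together with real pipes. The function names are hypothetical, and the example assumes a Unix-like system with `sort` and `wc` on the PATH.

```python
import os
import subprocess


def current_pid() -> int:
    """Return this process's ID, the getpid() that Java lacks."""
    return os.getpid()


def count_unique_lines(text: str) -> int:
    """Rough equivalent of the shell pipeline: sort -u | wc -l"""
    sort = subprocess.Popen(
        ["sort", "-u"], stdin=subprocess.PIPE, stdout=subprocess.PIPE
    )
    # Connect sort's stdout directly to wc's stdin with an OS-level pipe.
    wc = subprocess.Popen(
        ["wc", "-l"], stdin=sort.stdout, stdout=subprocess.PIPE
    )
    # Close our copy of the read end so only wc holds it.
    sort.stdout.close()

    # Feed the input and close stdin so sort sees end-of-file.
    sort.stdin.write(text.encode())
    sort.stdin.close()

    out, _ = wc.communicate()
    sort.wait()
    # Some wc implementations pad the count with spaces, so strip first.
    return int(out.decode().strip())
```

For example, `count_unique_lines("b\na\nb\n")` runs both programs and returns the number of distinct lines. This is only a sketch for small inputs; writing a large input before reading any output can block on a full pipe buffer.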

In conclusion, Loui's paper raises numerous interesting points about the success of scripting languages. I expect that the reasons for the rise of scripting languages will only get stronger, and languages that don't support the scripting model will have an increasingly hard time gaining adoption.

Note: quotes above are from the preprint and may not match the published article.